Where to use AI search in Condens

AI search and analysis live in four places in Condens:

Ask your Sessions to search raw transcripts and notes at Session, Project, or Workspace level

Participant profiles to search across all sessions from a specific participant

Whiteboards to group and explore tagged highlights with AI clustering and Find similar highlights

Insights Magazine to search across published, validated findings

Search across raw data in Sessions

Ask your Sessions (put simply, AI search on raw data) lets you search across your raw session transcripts and notes. You can use it at three levels:

1. Within a single Session

When you're inside a session, you're already working with a focused, defined set of data. Search here is straightforward; you can look for specific keywords or ask a natural language question, and the results are scoped to that session.

2. Across a Project

Searching at project level covers all sessions within that project. Since the data set is wider, it's good practice to combine your search with filters to keep your results relevant, for example by participant type, date, or any custom metadata fields you've set up.

3. Across your Workspace

Workspace-level search is global AI search across all your raw session data. It's the most powerful scope and the one people find most useful for cross-study questions. Because the sample can be very wide, combining it with classic search filters makes a real difference to the quality of your results.

Tips on writing good prompts for AI searches on raw data

Specific questions with concrete answers work best.

Within a single Session

Good questions at this level tend to be detail-oriented — drilling into a specific moment, sentiment, or topic within that conversation:

✅ "What did this participant say about the checkout flow?"

✅ "Were there any moments where the user expressed frustration?"

✅ "What was the overall sentiment toward the new feature?"

✅ "Did the participant mention any competitors?"

✅ "Where did the user struggle during the task?"

Across a Project or Workspace

At this level, good questions look for patterns, themes, and signals across multiple participants:

✅ "Who mentioned issues with the checkout flow?"

✅ "Which participants asked for a dark mode?"

✅ "Where did users describe feeling confused during onboarding?"

✅ "Which sessions included feedback about waiting for results to load?"

✅ "Did anyone talk about switching from a competitor, and what did they say?"

✅ "What recurring themes came up around the new dashboard?"

Questions that require interpretation or a generated conclusion won't yield the same kind of results:

❌ "What are our biggest usability problems?"

❌ "What do users think about our collaboration features?"

❌ "What should we improve first?"

You may still get some results for these. The AI can point you to relevant moments in transcripts, but you won't get a generated answer, ranking, or recommendation. We believe that kind of synthesis should remain in the researchers' hands, and the evidence AI search surfaces is what helps you do it.

Tips for better results

Searching your entire workspace can work, but the more focused your data set, the more relevant your results. Before you search, filter by project, session, or custom metadata fields to scope it to what's actually relevant.

Use specific feature, flow, or product names rather than general terms. "Profile setup" will surface more relevant results than "onboarding" if that's the language your participants used.

If results feel thin or off, try rephrasing. A slightly different question can surface different moments, especially if your participants used varied language to describe the same thing.

AI search surfaces as much relevant evidence as it can, and everything links back to its source. But it isn't guaranteed to catch every instance. Use it to get oriented and find the key evidence, then follow the source links to verify.

AI clustering on the Whiteboard

Once you've tagged highlights and pulled them onto a Whiteboard, AI-powered affinity mapping helps you group and explore them by theme, sentiment, or any framing you define. This is where synthesis starts, but you're working with evidence you've already selected, not raw transcripts.

How to write a good clustering prompt

Clustering prompts can carry more context than a session search question. You're not trying to match keywords; you're giving the AI a frame for how to think about the material you've brought together.

Simple, open-ended prompts work well when you want to explore broadly:

"Group these by the type of problem described."

"I want to understand the main pain points customers have."

"What are the main suggestions for improvement and areas of satisfaction in this feedback?"

"Organize these by how users feel about the experience overall."

More detailed prompts work well when you have a specific research context:

"These highlights are from usability tests on our onboarding flow. Group the findings into areas of confusion, smooth interactions, and overall task success."

"These highlights relate to users' motivations, frustrations, and product interaction habits. I want to understand common behaviors, recurring pain points, and key motivations."

"This is feedback from enterprise customers on a B2B workflow tool. Identify user expectations, frustrations, and feature requests to find areas for improvement and drive feature adoption."

"These are highlights from discovery interviews with first-time users. Group them by what surprised people, what felt familiar, and what felt missing."

Tips for better results with AI-powered affinity mapping

Try reapplying clustering with different prompts to see how the groupings shift. It's a good way to look at the same evidence from multiple angles.

Move from broad to specific. Start with an open-ended prompt to get an overview, then reapply with a more focused one to dig into a particular theme or dimension.

If groupings feel off, adjust the prompt rather than the highlights. The clustering reflects how you've framed the question as much as what's in the data. A clearer or more specific prompt usually gets you closer to what you're looking for.

Use Find similar highlights to expand a cluster. Once a theme starts to take shape, use Find similar highlights inside that cluster to pull in related evidence from elsewhere in your repository that you may have missed or tagged differently.

Use AI search on published findings and reports

The Insights Magazine is where your team publishes final, validated research findings. AI search here works differently from session search: you're searching across concluded, documented insights, not raw transcripts. It's designed for stakeholders who want to explore and surface insights easily but may not know the exact keywords or metadata behind them.

This is the right place for:

Exploring what your team already knows

Ask broader questions about your accumulated research knowledge:

"What have we learned about enterprise onboarding?"

"Is there any research on our mobile checkout flow?"

"What do we know about user trust?"

Checking for research gaps

The Magazine is also useful for understanding what you don't have documented yet:

"Do we have any findings on how power users customize their workflow?"

"Is there research on why customers downgrade their plan?"

"What do we know about how teams onboard new members?"

Preparing for stakeholder conversations

"What findings do we have about customer satisfaction with support?"

"What has research shown about the most requested integrations?"

"What do we know about how customers measure ROI from the product?"

These questions work here because the answers have already been synthesized and documented as findings. You're not asking AI to form a conclusion; you're asking it to find one that already exists in your knowledge base.

Tips for better results with the Magazine AI search

Phrase questions as knowledge queries, not analysis requests. "What do we know about X?" and "What have we learned about Y?" tend to work better than "What should we do about X?" because you're looking for documented findings, not asking AI to form a new opinion.

Search the Magazine before starting new research. It's a quick way to check whether a question has already been answered, or whether existing findings can inform your next study before you run it.

If your search doesn't return what you're looking for:

The Magazine will suggest alternative phrasings to help you get there. Try them; often it's just a matter of how the question is framed, not whether the information exists.

If there genuinely is no relevant research published, that's useful to know too. It tells you where your knowledge gaps are.

Magazine AI search in Slack and Teams

You can query your Insights Magazine directly from Slack or Microsoft Teams without leaving the conversation. This is useful when a stakeholder asks a research question mid-discussion and you want to surface a validated finding on the spot, or when you want to make research accessible to teammates who don't work in Condens day to day.

The search works the same way as in the Magazine itself: it searches across your published findings and links back to the source. For setup instructions and full details, see the Slack integration article and Teams integration article.

AI search via Condens MCP Server

If your team uses AI tools like Claude or ChatGPT, you can connect them to your Insights Magazine through the Condens MCP server. This lets you query your validated research findings directly from those tools, making it easy to bring research context into wherever your team is already working. Furthermore, you can combine Condens data with other sources, like Notion, Jira, HubSpot, and many more, all within the same conversation.

For a full list of MCP-compatible tools and the best ways to use these connections, check out the dedicated article on the Condens MCP server.

How AI search works and what to expect

AI search lets you ask questions in natural language instead of constructing keyword queries. Rather than matching exact terms, it looks for content that matches the meaning of what you're asking and surfaces results backed by evidence.

1. You stay in control

Whatever AI search surfaces, you can always trace it back to the original moment in your data. Results are linked to their source so you can read them in context and verify them yourself. Where AI makes suggestions, nothing is applied without your input: you can accept, edit, or discard anything before it becomes part of your research.

2. It retrieves, it doesn't interpret

When you ask a question, the AI looks through your data for moments, quotes, and content that are relevant to your question. It doesn't draw conclusions, form recommendations, or synthesize patterns on its own.

This means it works best when your question has a specific, concrete answer that exists somewhere in your data. The more you're asking it to interpret or conclude, the less reliable the results will be.

3. It won't make things up

One thing worth knowing: the AI won't make something up just to give you an answer. If there isn't enough relevant data to respond to your question, it will tell you that directly. That's intentional. A non-result is still useful information. It tells you either that the topic hasn't come up in your research, or that you need to adjust how you're asking.

4. Some questions are bigger than a search bar

Some questions are genuinely large enough to be research projects on their own. "What are our biggest product problems?" or "What should we prioritize this quarter?" won't yield useful results unless someone in a Session explicitly stated those answers or you documented them in an Artifact. That's because the AI is looking for that answer in your data, not forming one. Those questions are better tackled through your analysis and synthesis work, where you can bring together evidence and draw your own conclusions.

Used for the right things (like finding who mentioned something, surfacing specific feedback, pulling together evidence around a concrete topic), AI search is fast and genuinely useful.

Expert search with filters and queries

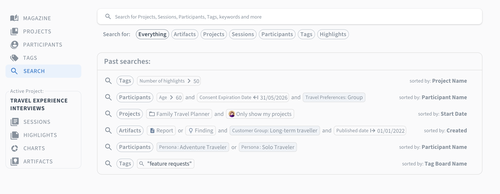

You don't have to use AI search to find things in Condens. Expert search is available at every level and gives you full control over your query.

You can build complex searches using multiple keywords, apply filters, use and/or logic, and change sorting options to get a comprehensive view of your results. You can search across everything or scope it specifically to artifacts, projects, sessions, participants, tags, or highlights.

It's a good starting point when you know exactly what you're looking for, or when you want to combine precise filtering with an AI search on top.
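If it helps to picture how keywords, filters, and and/or logic combine, here is a minimal conceptual sketch in Python. It is not Condens's API or query syntax. The `Session` fields, the sample data, and the `matches` helper are all hypothetical and exist only to illustrate the idea of "(keyword A or keyword B) and filter 1 and filter 2", followed by a sort.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Session:
    # Hypothetical fields for illustration; not Condens's data model.
    title: str
    participant_type: str
    recorded_on: date
    text: str


def matches(session: Session) -> bool:
    """(keyword A OR keyword B) AND participant filter AND date filter."""
    text = session.text.lower()
    has_keyword = "checkout" in text or "payment" in text
    return (
        has_keyword
        and session.participant_type == "enterprise"
        and session.recorded_on >= date(2024, 1, 1)
    )


sessions = [
    Session("Interview 1", "enterprise", date(2024, 3, 5), "The checkout flow felt slow."),
    Session("Interview 2", "free", date(2024, 4, 1), "Payment failed twice."),
    Session("Interview 3", "enterprise", date(2023, 11, 20), "Checkout was confusing."),
    Session("Interview 4", "enterprise", date(2024, 2, 10), "I could not find the payment settings."),
]

# Keep only matching sessions, then sort newest first.
results = sorted(
    (s for s in sessions if matches(s)),
    key=lambda s: s.recorded_on,
    reverse=True,
)
result_titles = [s.title for s in results]
print(result_titles)
```

In this made-up data set, only the two enterprise sessions from 2024 that mention either keyword remain. A narrowed result set like this is the kind of scope you might then run an AI search on.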