Understanding and Communicating Your User Research Impact

What is user research impact? Ultimately, it’s a qualitative narrative researchers use to achieve job security, promotion, or career mobility.

Research impact is hard to measure and can be elusive. The research impact narrative is how we can tell the story of why our work matters.

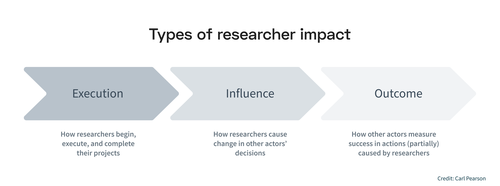

Types of user research impact

The way I develop my narrative centers around the kinds of impact in UX research: execution, influence, and outcome.

Execution impact is how researchers do the research itself.

Influence impact is how stakeholders change their decisions based on research insights.

Outcome impact is how stakeholders measure their success in the organization.

Even though business outcome metrics (revenue, user growth, etc.) are quantified, quantifying impact doesn’t always make sense for UX researchers. One reason UX research impact is qualitative is that it is focused on influence impact. This is the quintessential impact of UX researchers and the final phase that UX researchers “own” in an organization.

The second reason impact is qualitative is that it doesn’t make sense to quantify execution or influence.

The number of studies run matters less than the influence those studies led to. The number of roadmaps influenced is not as important as the nature and scope of each roadmap.

Ultimately, research ICs must focus on the qualitative narrative over the quantification of impact to show how they made an impact within an organization.

A company typically has a set number of times when performance is evaluated. I’ve worked at companies where my managers didn’t really care much about this, and the review was a casual and uninformative conversation. I’ve also worked at places where I stressed about it all year and was stack ranked against my peers. Where your role falls on that spectrum should tell you how much you need to care about your impact narrative.

Impact narratives are also useful when crafting resumes and case studies for a job search. The goals and strategy there are a bit different from those of a performance review. I’ll focus primarily on how I use the components of research impact for internal performance reviews, but I’ll include some notes relevant to job-seeking impact narratives.

Ultimately, you’ll get better job-seeking narratives based on the craft and care you put into your job performance. It starts with doing effective research and then documenting that process.

Not easy to create & maintain

It’s impossible to write a good research impact narrative without having done solid research work. Some of this is outside of a researcher’s control, but it’s important to optimally set up your context.

This starts with choosing the right team. When the market is tight, you can’t always be as choosy, but try to make your job interviews a two-way street. A hiring manager may assess your skills, but you should assess how easily you’ll be able to do good work.

Do they have a solid research operations program to help you be efficient? Can they name examples of when product teams were highly influenced by research work? Think of the things you’d want to be true for your ideal working context, and don’t be afraid to see how close a team will come to those.

When you’re in a research team, it’s important to focus on work that is not only important, but also has a high likelihood of activation (see more in this forthcoming book).

If two teams need important research work done, but only one team has a product manager who is likely to listen to your findings, then that becomes an easy choice for prioritization. Important work is not enough on its own to make research impact; stakeholders must be ready to act as well.

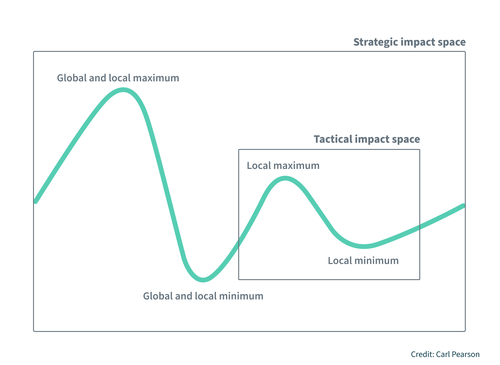

Research that has a strategic impact also typically begins by designing the plan with a large scope.

Occasionally research questions with large scopes will come to you or be asked by stakeholders, but I often find stakeholders are focused on solving their immediate problem (rightfully so). For that reason, it’s important to conduct at least some work that no one is asking for. This enables you to take on a problem with a huge scope that sets the stage for strategic impact down the road.

Accessing and organizing research impact along the way

Bringing great work to your impact narrative requires you to document work effectively. It’s easy to know how you execute (number of projects, readouts, etc.), but it’s hard to document influence and outcome impact.

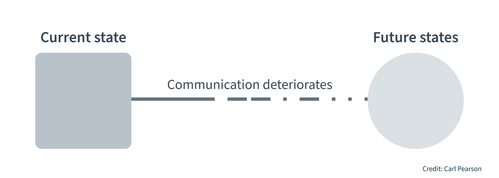

Especially as projects get more complex, you have to navigate the ways communication can deteriorate over time. If you’re lucky, stakeholders will cite your work directly in strategy documents or product requirements documents. It can still take some effort to track those down and keep awareness of where you’ve been cited. If you’re less lucky, your influence will not be visible in any writing or documentation at all. It’s important to reach out to stakeholders and follow up to learn about your influence.

I use a formal tracker (template) to organize my impact information. It’s fairly simple, but naturally develops your impact narrative for each project as you track the ways research impacts your organization.

When you’re collecting impact, it’s more important to capture the information than to organize it perfectly from the start. Connections with stakeholders can weaken, chat retention policies can eat conversations, etc.

While I have a formal tracker, a lot of the information starts from a quick screenshot tossed into a folder called “impact”. I find it easier to have a messy landing pad so it takes almost no time to ensure the impact is saved. If you are too rigid in saving the impact, you may miss some of it because you have a competing priority at the moment.
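As an illustration only, here is a minimal Python sketch of what a lightweight tracker could look like. It is not the template mentioned above: the names `ImpactEntry`, `ImpactType`, and `group_by_project`, along with the project names and file path, are hypothetical and chosen just for this example. The idea is that each quick capture becomes one entry tagged as execution, influence, or outcome, and grouping by project lets each project’s story come together over time.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum


class ImpactType(Enum):
    EXECUTION = "execution"
    INFLUENCE = "influence"
    OUTCOME = "outcome"


@dataclass
class ImpactEntry:
    project: str
    impact_type: ImpactType
    note: str          # what happened, in your own words
    source: str = ""   # hypothetical pointer, e.g. a screenshot in the "impact" folder
    logged_on: date = field(default_factory=date.today)


def group_by_project(entries):
    """Collect entries per project so each project's evidence sits in one place."""
    grouped = {}
    for entry in entries:
        grouped.setdefault(entry.project, []).append(entry)
    return grouped


# Made-up entries, for illustration only.
entries = [
    ImpactEntry("Onboarding survey", ImpactType.EXECUTION, "Readout to design leads"),
    ImpactEntry("Onboarding survey", ImpactType.INFLUENCE,
                "PM cited findings in roadmap doc",
                source="impact/roadmap-citation.png"),
    ImpactEntry("Pricing interviews", ImpactType.EXECUTION, "Report shared in team channel"),
]
grouped = group_by_project(entries)
```

A spreadsheet with the same columns would work just as well. What matters is that saving an entry takes almost no effort.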

Defining success

Success will look different for every organization and every manager. It’s valuable to take some time to understand what you're expected to do in your working context. Some teams have formalized career matrices for each title and level — this is excellent because it’s a concrete roadmap for your success.

Some teams have no such information and you’ll need to set aside time with your manager to define the expectations. It’s worth focusing time here so you don’t spend effort on impact that won’t count toward how your work is judged. Even with a formal career matrix, individual manager preferences will contribute to how you’re evaluated.

Think about the kinds of impact that matter most. Typically, influence impact (citations in documents or evidence of shaping team direction) is very important.

Some teams may need to show more direct outcome impact (revenue, user growth) tied to their projects — this kind of impact is challenging to track so it’s good to know if you need to put in the extra effort here.

There may be parallel kinds of impact like showing personal growth or supporting other ICs through things like teaching skills or helping out when another researcher is stuck. Again, these will all be manager and team dependent, so take time to map it out.

Lastly, think about what you value. What are you trying to do? Get a promotion? Grow your skills? Just maintain a steady pace? Knowing this will help you calibrate against the formal or informal career matrix, to show if and where you need to push yourself.

You’ll be in a great place to develop your research narrative once you have defined success, executed solid research, and effectively tracked its impact.

Developing your research impact narrative

Your research impact narrative is a motivated piece of communication. Aim to be persuasive like you would be in any other research deliverable. You want to prove a specific point in a story, not document every single thing you’ve done. Your impact tracker will likely have more in it than you’ll ultimately use in the narrative. Including less crucial details will only serve to distract from the strongest elements of your narrative without driving more value. Your goal is not to say how much work you did, or how hard it was, but why your work mattered to the organization.

Structurally, I have a short “tl;dr” section to summarize the major points of impact. Below that, I have a bigger outline of the projects I did that led to impact and other important work (like supporting research teammates or external presentations).

I like to consider the balance of the types of research impact in my narrative: execution, influence, and outcome. These are the three elements that a narrative arc for research impact follows, but they rarely each take up an even third of it.

Execution

Execution is the least important impact to the business and the least important to me when my work is strong. It’s still important to track it to set the stage for other impact types and for some diagnostic reasons.

In my tracker, I can naturally see the number of projects I complete each quarter. That number has very little to do with how I write an impact document each review cycle.

About 20% of my projects give me about 80% of my impact over the long term. If I pursued the number of projects per quarter metric, I would probably spend less time being deliberate in what projects I choose to do. That deliberation and understanding of the need for certain projects over others is what has yielded my strongest impact over the years.

My advice would be to focus on the number of projects only if you aren’t sure what constitutes a good project and need to employ a quantity over quality approach. It’s not an ideal situation but you may need to do this if you’re a solo researcher who is also very junior.

Execution includes all the ways researchers do and get work. An increase in the number of research requests is certainly gratifying: it’s an indication that people like and know your work! But ultimately, it doesn’t directly help the business at all (Sosik would say it’s not impact at all).

I mention inbound project requests to my manager, but I don’t value it highly for writing an impact document. It’s a good indicator that people see value in my work, so I use it to keep a pulse on teams where research is going well and partnerships could be fruitful.

The things I track most often in execution (other than the default number of projects) are where and to whom I shared my findings. I don’t necessarily report these out in aggregate numbers as impact milestones in my narrative, but they set the stage for how I arrived at influencing the team or connecting with business outcomes. I’m unlikely to include a project’s execution in my narrative if there is no influence or outcome impact.
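The selection rule in the last sentence above can be sketched in a few lines of Python. This is only an illustration under assumed conventions: the tracker rows are hypothetical `(project, impact_type, note)` tuples, the project names are made up, and `narrative_candidates` is a name invented for this example.

```python
# Hypothetical tracker rows: (project, impact_type, note)
rows = [
    ("Checkout study", "execution", "Readout to product leads"),
    ("Checkout study", "influence", "Team dropped a planned feature after findings"),
    ("Settings audit", "execution", "Report shared in team channel"),
]


def narrative_candidates(rows):
    """Return projects with at least one influence or outcome entry, in first-seen order."""
    selected = []
    for project, impact_type, _note in rows:
        if impact_type in {"influence", "outcome"} and project not in selected:
            selected.append(project)
    return selected


candidates = narrative_candidates(rows)
print(candidates)  # ['Checkout study']
```

Here the "Settings audit" project has only execution entries, so it stays in the tracker but is left out of the narrative shortlist.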

Depending on your team or UX maturity, getting visibility (share outs to executives, publications, etc.) could be of great importance to your manager or team, so it’s something to consider contextually. However, not all teams or managers will value this. That makes it all the more important to understand how value is defined as you consider what kind of impact you want to make.

Execution is important to showcase your craft, but in an impact narrative, it mainly sets the stage for your influence and outcome impact.

Influence

The practice of UX research is not done for the sake of execution. It’s done to de-risk decision-making. The way that happens is influence. For this reason, it’s the archetypical way that researchers are judged on performance. This doesn’t mean execution isn’t important (far from it). Execution is necessary, but insufficient on its own.

Influence impact is much harder to track than execution impact and so much more important to capture for an impact narrative. This is the type of impact I spend the most time and space on in my impact narratives.

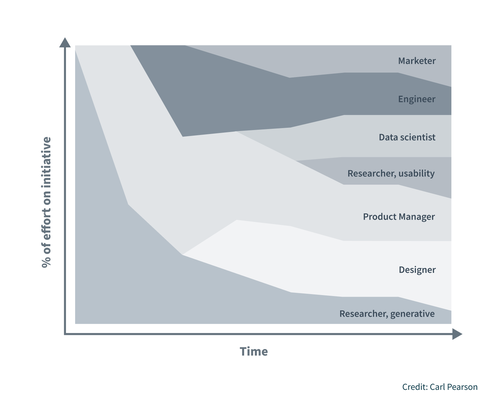

The scope of a researcher’s influence grows with their seniority. While a junior researcher may influence direct fixes in existing roadmaps, more senior researchers are expected to influence multiple product pillars and initiate new lines of product work entirely.

This means influence is essential to practice as a researcher if you want to grow. And if you’re a senior researcher, influence is table stakes for success in your work.

The most concrete elements you can write about are citations in roadmaps or strategy documents. I still will share direct quotes (often from Slack) where people note how findings influenced their thinking, even without a formal citation. This may be especially important where findings lead to a team not doing something — it’s still good influence impact because the team made a different choice due to your input.

Influence impact is what I spend the majority of my time driving towards in my work, tracking throughout the year, and writing about in my research impact narrative.

Outcomes

Outcome impact is hard (and sometimes not possible) to achieve. A mature UX research practice will recognize this and optimize for influence impact. However, outcome impact is great when it can be achieved and measured.

Some companies may expect it more than others, so it’s again important to understand what your organization values when focusing your impact.

Researchers, as members of a rigorous discipline, are strict about assigning causality in their research work. However, an impact narrative is a story, not an experiment or test. Researchers must claim what they have a hand in.

By the time the outcome impact occurs, an engineer has already built the feature, a data scientist has tested it, and a product manager has organized it. Even so, you still played a key role, whether it was seeding the basic idea or testing the usability after it was designed. It’s important to own your part in the outcome.

Don’t overstate it and claim you did all the work. Just say what role you did play (“I guided the team to launch feature X over feature Y, leading to +10% monthly user growth”).

By the time outcome impact happens, it’s probably much later than when you delivered your insights.

This means you will have to go out of your way to figure out what outcomes occurred. It may feel awkward at first, but it’s a reasonable request and one that (for me) is also driven by a natural curiosity about the work, not just tracking impact.

You can ask things like, “Did XYZ research influence your thinking on our last project? I’m curious to see where my work has been most helpful.” Or, “How did the results for that experiment turn out?” People are often happy to share more about their work and how it’s going.

One last note on outcome impact: if you’re writing a resume, rather than a research impact narrative, it is more useful to show outcome metrics. It demonstrates your ability to have a sense of the business and what it cares about. Still, don’t overdo it. Metrics that simply state you were responsible for millions of dollars of revenue or massive user growth may be seen with skepticism. Keep your outcome impact grounded and clearly linked to your work.

An example

For my research impact narrative, I spend some time on execution to set context and show my work, but I spend the majority of my time noting how I influenced the team. Some of those influences lead to outcome impact, but it’s normally less than half of my projects that cite direct business outcomes.

Here is an example (partially redacted) from a real impact narrative I wrote. I got solid reviews for this half and did not cite outcome impact:

"I executed a survey experiment about our [feature], a high visibility product area with continuous reviews from the VP of [product team]. My research led to a reimagining of the way we increase [key user attitude] that relies on our unique strength as a [key user attitude]. This [feature] approach is now a major path of new product development and a ‘big bet’ in our product roadmap for [year] after I championed these findings to research, product, and design directors/leads. The research was also chosen by leadership for me to present at broad forums like the [pillar] Research All Hands and [product team] Leads review meeting."

Notice the following:

I set context with execution impact and by stating the importance to our organization.

I focus on how my research changed the team’s thinking and how it led to different actions.

I also noted some points of visibility, as this was valued by the team.

Like I said, focus on what your team values. Your research impact narrative is not an exhaustive list of everything you did. Share what makes a compelling case for why your research mattered to the organization you work in and cut what doesn’t drive that story.

Getting support

If this seems overwhelming, you’re not alone. It gets easier with time and as you find support from others.

Be clear with your manager about what impact you're focusing on and why (promotion, skill development, etc.). The more they know, the more they can help you achieve those goals. Similarly, other people at adjacent and higher levels in your research team can share insights into how they’ve achieved success through their narratives. The more you talk with others and learn, the better equipped you’ll be.

Some things, like career development for a new role, may be too sensitive to share with a manager. It’s important to cultivate a network of people outside of wherever you work right now — don’t keep all your eggs in one basket. People with an outside perspective can help you calibrate to goals that go beyond your current role and give an outlet for discussing things that may feel too risky to share with coworkers or managers.

Whatever your goals are now, don’t go it alone. Find the right people to share with and it will make the process much easier.

Conclusion

It’s easy to get tied up in the demand to focus on hard numbers, as product management or finance do. Research doesn’t function in the same way as many other roles. We primarily own influence, which is much harder (or impossible) to quantify than user growth and revenue growth.

As researchers, we need to craft compelling research impact narratives to showcase why our work matters to an organization. Sometimes this involves outcome impact, but it primarily means showing how what we executed influenced teams to make better decisions.

If you set yourself up to do solid research and effectively track the impact you make, then you can develop a research impact narrative that leads to achieving job security, a promotion, or career mobility.